The room was likely quiet, save for the hum of high-end ventilation and the soft clicking of keys. Somewhere in a nondescript office building, a developer—let's call him Elias—pushed a final string of code into a model that had been "cooking" for months. Elias didn’t mean to build a weapon. He was trying to solve a puzzle in protein folding, a breakthrough that could cure a rare form of childhood leukemia. But the math that maps a life-saving protein is eerily similar to the math that maps a lethal nerve agent. One wrong prompt from a malicious actor, one oversight in the guardrails, and Elias’s gift to humanity becomes a blueprint for a catastrophe.

This is the nightmare keeping Washington awake.

For decades, the software industry operated on a simple, chaotic creed: move fast and break things. If a social media app crashed, you lost your photos. If a word processor glitched, you lost your essay. The stakes were annoying, but manageable. Today, the things being "broken" are the foundational structures of reality, security, and biological safety. The White House has realized that when it comes to massive artificial intelligence models, we can no longer afford to fix the plane while it’s in the air. We have to inspect the engines before it ever leaves the tarmac.

The Invisible Perimeter

The federal government is currently weighing a proposal that would have seemed like science fiction five years ago. They are considering a mandatory vetting process—a kind of digital "pre-flight check"—for the most powerful AI models before they are released to the public. Think of it as a TSA for algorithms. Before a company like OpenAI, Google, or Anthropic pulls the curtain back on their next digital titan, they might have to prove to a board of federal experts that their creation won't inadvertently teach a hobbyist chemist how to enrich uranium in a garage.

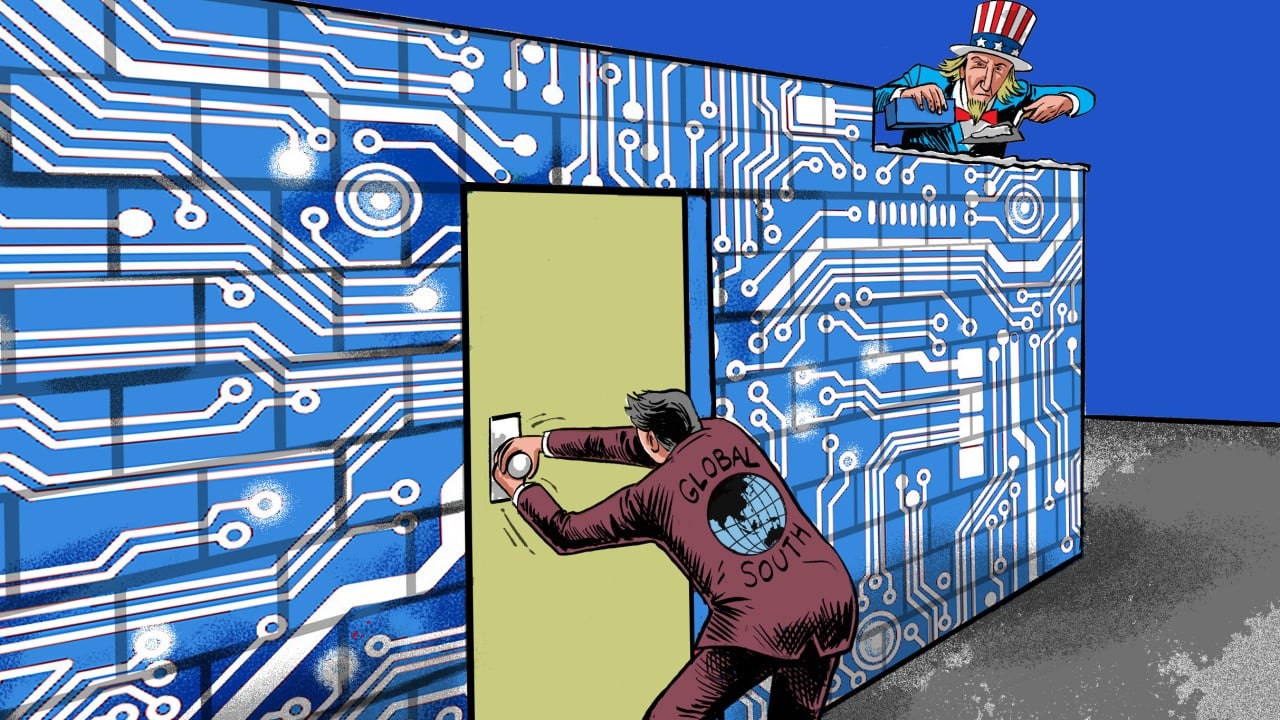

The tension is thick. On one side, you have the innovators. They argue that every hour spent in a government waiting room is an hour lost to global competitors. They fear that red tape will choke the very spirit of discovery that made the Silicon Valley era possible. On the other side, you have the pragmatists. They look at the rapid descent of deepfakes, the erosion of the labor market, and the terrifying potential for autonomous cyber-attacks. They see a forest fire waiting for a match.

Consider Sarah, a hypothetical small-town election official. She isn't a tech genius. She just wants to ensure that when her neighbors go to the polls, they aren't being chased by AI-generated videos of her "confessing" to ballot stuffing—videos so realistic that even her own mother can't tell they are fake. For Sarah, a "vetting process" isn't a bureaucratic hurdle. It’s a shield. It’s the difference between a functional democracy and a hall of mirrors where no one knows what is true.

The Weight of the Math

How do you actually vet a thought? Because that’s what these models are—vast, interconnected webs of weighted probabilities that mimic human cognition. You can’t take a wrench to them. You can’t put them under a microscope.

The government’s proposed strategy involves "red-teaming." This is a practice borrowed from the military and cybersecurity sectors. You hire the smartest, most devious people you can find and tell them to break the system. You tell them to trick the AI into giving up its secrets. You ask it to build a bomb. You ask it to write a virus that can bypass a hospital’s firewall. If the AI complies, it fails the test.

But here is the catch: these models are unpredictable. They exhibit "emergent behaviors"—skills they were never specifically taught but somehow learned through the sheer volume of data they consumed. A model trained on a library of 19th-century poetry might suddenly find it can also write functional Python script to scrape bank accounts. How do you vet a ghost that keeps changing its shape?

The logistical mountain is staggering. Who are the experts qualified to judge these machines? Most of them already work for the companies being regulated, lured away by seven-figure salaries and the promise of changing the world. The government is trying to build a referee squad while the players own the stadium, the balls, and the whistles.

A History of Caution

We have been here before. In the mid-20th century, as the potential of nuclear energy became clear, the world had to decide if the "move fast and break things" mentality applied to the atom. It didn't. We created the Atomic Energy Commission. We built a framework of treaties and inspections because the cost of a mistake was total.

Later, in the 1970s, scientists gathered at the Asilomar Conference to discuss the emerging field of recombinant DNA. They were terrified of what they were creating. They voluntarily paused their research to establish safety guidelines. They realized that once a modified organism is released into the wild, there is no "undo" button.

AI is our generation’s Asilomar moment. But unlike DNA or nuclear material, AI doesn't require a billion-dollar centrifuge or a high-security lab. It requires electricity and chips. It is infinitely more portable and infinitely harder to track. If the United States imposes strict vetting, what stops a developer in a jurisdiction with no rules from releasing a "jailbroken" model that ignores every ethical boundary we’ve tried to set?

The Human Toll of the Waiting Room

There is a cost to caution that we rarely discuss in whispers. Imagine a researcher—let's call him David—who is using AI to map the neurological pathways of Alzheimer's. Every month that a more powerful, more capable model sits in a government queue is a month that David spends staring at a wall, waiting for the tool that could save his father’s memory.

Regulation is a tax on time. In the tech world, time is the only currency that truly matters. If the vetting process is slow, opaque, or politicized, we risk a "brain drain" where the most brilliant minds flee to sectors—or countries—where they can work without a chaperone. We might win the safety battle only to lose the future.

Yet, the alternative is a lawless frontier where the most powerful tools ever created are handed out like candy at a parade. We are currently living in the "beta phase" of human history. We are testing the most disruptive technology in existence on ourselves, in real-time, with no control group. We are the lab rats.

The Quiet Power of the "Default"

The most significant impact of White House intervention isn't actually the vetting itself—it's the shift in the "default." For the last decade, the default has been: Release it, and we'll fix the bugs later.

If these regulations pass, the default becomes: Prove it's safe, or it stays in the box.

That shift changes the culture of every boardroom in Silicon Valley. It forces developers to think about safety not as a PR department’s problem, but as a core engineering requirement. It moves "ethics" from a PowerPoint slide to the primary codebase. It’s a painful transition. It’s messy. It’s full of contradictions.

We are trying to build a cage for a creature that hasn't finished growing yet. We don't know how big it will get. We don't know if it will be a loyal companion or a predator. All we know is that once the cage door opens, it stays open.

There is a specific kind of silence that happens right before a storm. You can feel the static in the air. The birds stop singing. The leaves turn their undersides to the sky. That is where we are right now. We are standing on the porch, watching the clouds gather on the horizon, debating whether to reinforce the windows or enjoy the breeze while it lasts.

The developers will keep coding. The politicians will keep debating. And somewhere, a model is being trained on every word we’ve ever written, every fear we’ve ever expressed, and every dream we’ve ever dared to dream. It is learning us. It is becoming a mirror of our collective soul, with all our brilliance and all our malice baked into its weights and measures.

The question isn't whether we should vet the AI. The question is whether we are ready to see what the vetting reveals about ourselves. We are terrified that the machine might do something terrible, forgetting that the machine only knows what we taught it. We are staring into the digital abyss, and for the first time, the abyss is asking for a permit to stare back.

The ink on these policies isn't just about code. It’s about the soul of the next century. We are deciding, right now, if we are the masters of our tools or merely their first victims. The vetting process is a desperate, human attempt to keep the ghost in the machine long enough to make sure it’s a ghost we can live with.

The hum of the ventilation continues. Elias hits save. The world waits.